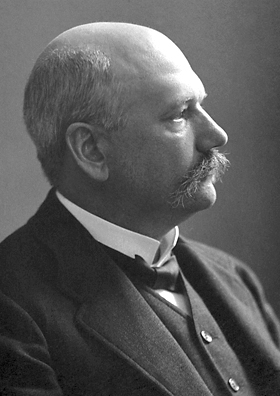

101 questions with a bioinformatician #0: Ian Korf

This post is part of a series that interviews some notable bioinformaticians to get their views on various aspects of bioinformatics research. Hopefully these answers will prove useful to others in the field, especially to those who are just starting their bioinformatics careers.

Ian Korf is the Associate Director of the UC Davis Genome Center and interim manager of the Genome Center Bioinformatics Core facility. He also mentioned that "According to the cake I was given after getting tenure, my title is also 'ASS PRO' (there wasn't enough room to write Associate Professor)". He also asks: "Please just call me Ian. If you call me Dr. Korf, I'll think you're talking about my dad (the in-famous Mycologist)".

You can find out more about Ian from the Korf Lab website and from his new SuperScience and Sorcery blog. Ian is also on Twitter as @iankorf. Careful though, you might find his constant tweeting a bit distracting.

And now, on to the 101 questions...

001. What's something that you enjoy about current bioinformatics research?

The constant innovation. There are so many problems to solve and so much cleverness out there.

010. What's something that you *don't* enjoy about current bioinformatics research?

The constant innovation. Many people are inventing the same thing and giving it a different name. Redundancy is sometimes unavoidable (but also sometimes useful).

011. If you could go back in time and visit yourself as an 18-year-old, what single piece of advice would you give yourself to help your future bioinformatics career?

Be nicer to everyone. Science is a social activity. When choosing between being correct and being agreeable, I generally tend towards correctness. Sometimes it's necessary to beat someone with the club of correctness (sounds like a Munchkin card), but more and more I think it's better to walk a little off the optimal path if you can walk there with someone.

100. What's your all-time favorite piece of bioinformatics software?

BLAST, for many reasons. It has great historical importance and is still relevant today. It has a lot of educational value because it has a mixture of rigorous theory and rational heuristics.

101. IUPAC describes a set of 18 single-character nucleotide codes that can represent a DNA base: which one best reflects your personality?

That website has only 15 (U is for RNA and gaps do not symbolize letters - see Karlin-Altschul statistics). But to answer the question, I think B, because B is a lot of things, but not A.
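For readers who don't have the ambiguity codes memorized, here is a minimal Python sketch of the 15 standard IUPAC DNA codes that Ian counts above. The dictionary name `IUPAC` and the helper `matches` are made up for this illustration and don't come from any particular library.

```python
# The 15 IUPAC nucleotide codes for DNA, each mapped to the bases it can stand for.
# (U and the gap characters are left out, following Ian's count.)
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT",
    "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG",
    "N": "ACGT",
}


def matches(code: str, base: str) -> bool:
    """Return True if the IUPAC code can represent the given DNA base."""
    return base.upper() in IUPAC[code.upper()]


if __name__ == "__main__":
    print(len(IUPAC))         # number of codes in the table
    print(IUPAC["B"])         # the bases that B can stand for
    print(matches("B", "A"))  # can B be an A?
```

In other words, B can be C, G, or T: a lot of things, but not A.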