DOGMA: a new tool for assessing the quality of proteomes and transcriptomes

A new tool, recently published in Nucleic Acids Research, caught my eye this week:

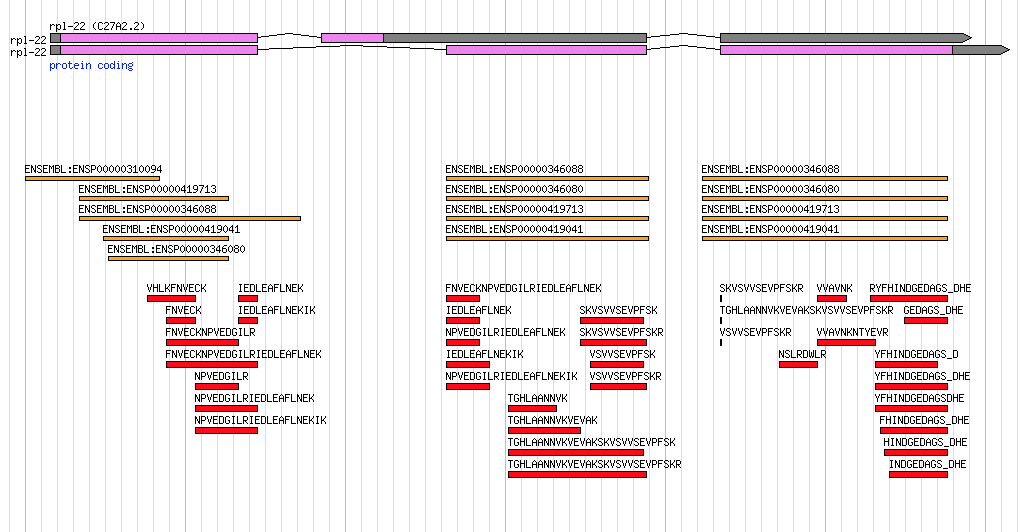

The tool, by a team from the University of Münster, uses protein domains and domain arrangements to assess the 'completeness' of a proteome or transcriptome. From the abstract…

> Even in the era of next generation sequencing, in which bioinformatics tools abound, annotating transcriptomes and proteomes remains a challenge. This can have major implications for the reliability of studies based on these datasets. Therefore, quality assessment represents a crucial step prior to downstream analyses on novel transcriptomes and proteomes. DOGMA allows such a quality assessment to be carried out. The data of interest are evaluated based on a comparison with a core set of conserved protein domains and domain arrangements. Depending on the studied species, DOGMA offers precomputed core sets for different phylogenetic clades.

Unlike CEGMA and BUSCO, which run against unannotated assemblies, DOGMA first requires a set of gene annotations. The paper focuses on the web server version of DOGMA, but you can also access the source code online.
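To make the idea of comparing a dataset against a core set of domain arrangements a bit more concrete, here is a toy sketch in Python. It is purely illustrative and is not DOGMA's actual code or scoring scheme: the `completeness` function, the placeholder domain names (`domA`, `domB`, …) and the rule that an arrangement only counts if it matches a protein exactly are all assumptions made for this example.

```python
from typing import Dict, List, Set, Tuple

# An "arrangement" here is simply the ordered tuple of domains in one protein.
Arrangement = Tuple[str, ...]


def completeness(core: Set[Arrangement], proteome: Dict[str, List[str]]) -> float:
    """Return the fraction of core arrangements seen in at least one protein.

    Hypothetical scoring rule for illustration only: an arrangement counts
    as found if some protein has exactly that ordered list of domains.
    """
    if not core:
        raise ValueError("core set must not be empty")
    observed = {tuple(domains) for domains in proteome.values()}
    return len(core & observed) / len(core)


# Made-up core set and annotated proteome (placeholder domain names).
core_set = {("domA",), ("domA", "domB"), ("domC", "domD"), ("domE",)}
annotated = {
    "protein1": ["domA"],
    "protein2": ["domA", "domB"],
    "protein3": ["domE"],
    "protein4": ["domX"],  # not part of the core set, so it is ignored
}

score = completeness(core_set, annotated)
print(f"{score:.0%} of core arrangements found")
```

In this made-up example, three of the four core arrangements turn up in the annotated proteins, so the toy score is 75%. A real tool would also need to handle partial matches, repeated domains and clade-specific core sets.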

It's good to see that other groups are continuing to look at new ways of assessing the quality of large genome/transcriptome/proteome datasets.

What's in a name?

Initially, I thought the name was just a word that both echoed 'CEGMA' and reinforced the central dogma of molecular biology. Hooray, I thought: a bioinformatics tool that just has a regular word as a name, without relying on a contrived acronym.

Then I saw the website…

- DOGMA: DOmain-based General Measure for transcriptome and proteome quality Assessment

This is even more tenuous than the older, unrelated tool that is also called DOGMA:

- DOGMA: Dual Organellar GenoMe Annotator